A-MEM: Zettelkasten for agents

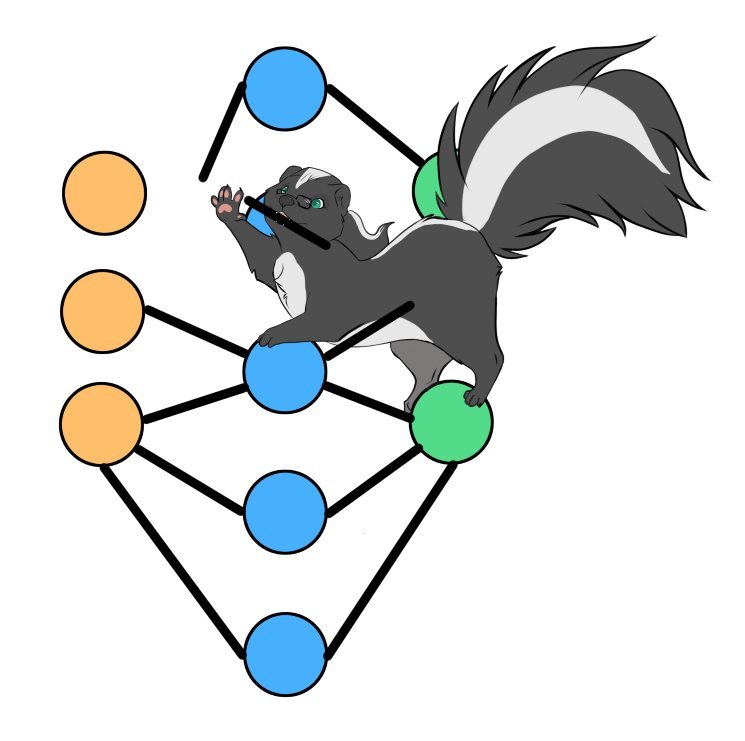

An actively managed memory network for LLMs

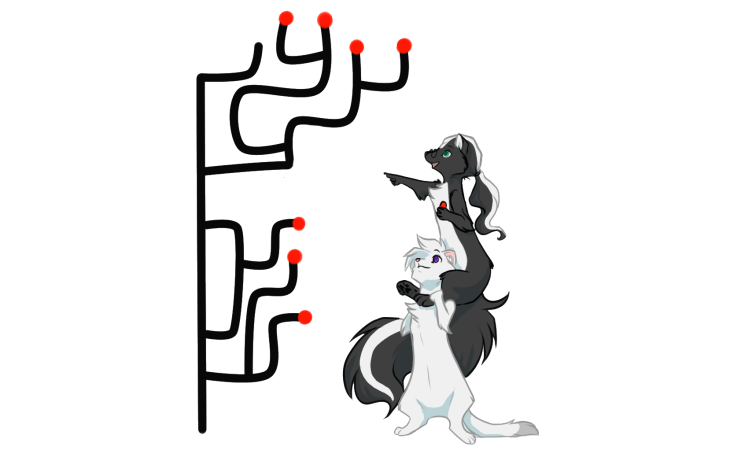

The Zettelkasten method is a note-taking system invented by Niklas Luhmann, a German sociologist who used it to produce more than 70 books and hundreds of articles during his career. The idea is that every thought becomes a card, and the cards are linked to each other associatively rather than filed into categories. Luhmann described his 90,000-card system as a “conversation partner” because the network of connections would surface relationships he hadn’t consciously noticed.

I’ve tried it myself. It wasn’t for me. The problem wasn’t the concept (which is genuinely elegant) but the upkeep. For Zettelkasten to work, you need to invest time at every step: writing atomic notes, choosing the right links, revisiting old cards to update them. If you slack off on any of those, the whole system degrades. Notes pile up unlinked, connections become stale, and you end up with something worse than a simple chronological notebook because now you expect the links to be there and they aren’t.

So when I saw that A-MEM takes this exact method and hands the upkeep to an LLM, my first reaction was: genius or dumb? Let’s find out!